Benchmarking is good, actually

Everyone knows benchmarks are flawed. But when you have two weeks to pick a million-dollar platform, flawed data beats no data.

TL;DR: Should you ignore flawed benchmarks? No. Teams get a week or two for platform decisions with multi-year financial consequences, and most migrations fail or exceed their budgets. Imperfect data read with skepticism beats no data at all.

The case against is already well known

Database benchmarks have problems, and everyone in the industry knows it. Vendor benchmarks serve marketing. Configuration choices can swing results by 10x. Standard benchmarks like TPC-H test workloads that may look nothing like yours. Community benchmarks vary in methodology and rigor.

I'm not going to argue against any of that. It's all true.

But here's the part that gets left out of the critique: in my experience, most data teams don't have the luxury of ignoring benchmarks just because they're flawed. Someone has to make platform decisions, those decisions have real financial consequences, and the people making them need some kind of comparative data to work with.

I'll make the case in three steps: why teams can't benchmark everything themselves, why migration mandates make comparative data non-optional, and how to read benchmark results without getting fooled.

The two-week platform decision

The advice "just benchmark it yourself" sounds reasonable until you look at how platform decisions actually happen.

Most organizations get a week or two for platform selection before committing to implementations that span 12-36 months [1]. A widely cited Gartner finding from 2009 put migration failure or major-overrun rates at 83% [2]. The figure is old, but it is still one of the most commonly referenced baselines for migration risk.

The data engineer investigating Snowflake alternatives is usually the same person maintaining the current Snowflake deployment and responding to production incidents. "Run comprehensive benchmarks yourself" competes directly with "keep the business running." For most organizations I've worked with, self-benchmarking at production quality is simply not practical.

The configuration trap

Even when teams find time to evaluate platforms, they run into a subtler problem: properly configuring each platform is genuinely hard. Snowflake's configuration decisions differ radically from DuckDB's, which differ again from BigQuery's. Compute sizing, memory allocation, parallelism settings, query patterns that trigger materialization: each platform has its own arcane lore.

I've seen Postgres benchmarks that forgot to create indexes. ClickHouse tests running with outdated engine choices. Spark memory settings that force unnecessary spills. Snowflake comparisons using XS warehouses against a competitor with 10x more compute. The difference between "20% slower" and "10x faster" can come down to a single configuration choice.

This is the trap: without external benchmarks, teams unknowingly run suboptimal configurations on the platforms they're less familiar with, which are precisely the platforms they're evaluating. The resulting comparison reflects configuration skill rather than product capability.
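One guard against the mismatched-compute version of this trap is to compare cost per run rather than raw runtime. The sketch below is a minimal illustration; the platform labels, runtimes, and hourly prices are all hypothetical and do not come from any real benchmark.

```python
from dataclasses import dataclass


@dataclass
class Run:
    platform: str        # hypothetical label, not a real product
    runtime_s: float     # wall-clock seconds for one pass over the query set
    usd_per_hour: float  # assumed hourly price of the compute used


def cost_per_run(run: Run) -> float:
    """Dollars of compute spent on one pass over the query set."""
    return run.runtime_s / 3600 * run.usd_per_hour


# Made-up numbers: a small warehouse vs. a cluster with 10x the hourly price.
runs = [
    Run("small_warehouse", runtime_s=600.0, usd_per_hour=3.0),
    Run("large_cluster", runtime_s=120.0, usd_per_hour=30.0),
]

for r in sorted(runs, key=cost_per_run):
    print(f"{r.platform}: {r.runtime_s:.0f}s, ${cost_per_run(r):.2f} per run")
```

With these made-up inputs the larger cluster is 5x faster but costs twice as much per run. Whether that counts as a win depends on whether latency or spend is the binding constraint, which is exactly what a raw runtime chart hides.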

What this looks like in practice

The scale involved is striking. One healthcare organization maintained Teradata for 20+ years, serving 5,000+ users across 25 lines of business. The cloud migration ultimately saved $140M annually, but required migrating every user and workload to validate that the new platform could handle production load [3]. Another Teradata-to-Snowflake migration involved 700+ query translations over 8 months before achieving a 60% cost reduction and an 85% query efficiency improvement [4].

These are partner and vendor-adjacent case studies, so I treat them as directional evidence rather than universal outcomes.

This tracks with every migration I've worked on. Parallel validation, running legacy and new platforms simultaneously, is standard practice for good reason [5]. These decisions evolve iteratively based on ongoing measurement, not one-time evaluation. Some data comes from internal testing, some from external sources. But "ignore all benchmarks because they're imperfect" was never a viable option.

When someone else picks your database

Sometimes the platform decision isn't yours to make. I've been on the receiving end of these mandates more than once.

"We're moving from AWS to Google Cloud." "We're consolidating to Databricks." "We're replacing Teradata with a modern cloud warehouse." These decisions get made by executives balancing factors you may not see: vendor relationships, strategic partnerships, existing contracts, real estate costs. The mandate arrives as a fait accompli. Your job isn't to choose the best platform; it's to execute the migration.

But you still need to understand the cost-performance tradeoffs of what you're moving to. That need doesn't go away just because the choice was made above your pay grade.

This happens more often than the industry admits. In Foundry's 2024 survey, 63% of IT decision-makers said they had accelerated their cloud migration efforts, up from 57% the prior year [6], and the pace shows no sign of slowing. My expectation is that AI coding agents will further compress migration timelines, which increases pressure to make platform decisions faster.

The CFO question

Without benchmarks, the CFO asks "Why move to GCP?" and you answer: "Lower cost per compute." But I've learned the hard way that configuration matters enormously. BigQuery on-demand pricing differs dramatically from capacity-based slot pricing, and Snowflake warehouse sizing creates order-of-magnitude cost differences for identical workloads [7]. Without comparative data, you're guessing.

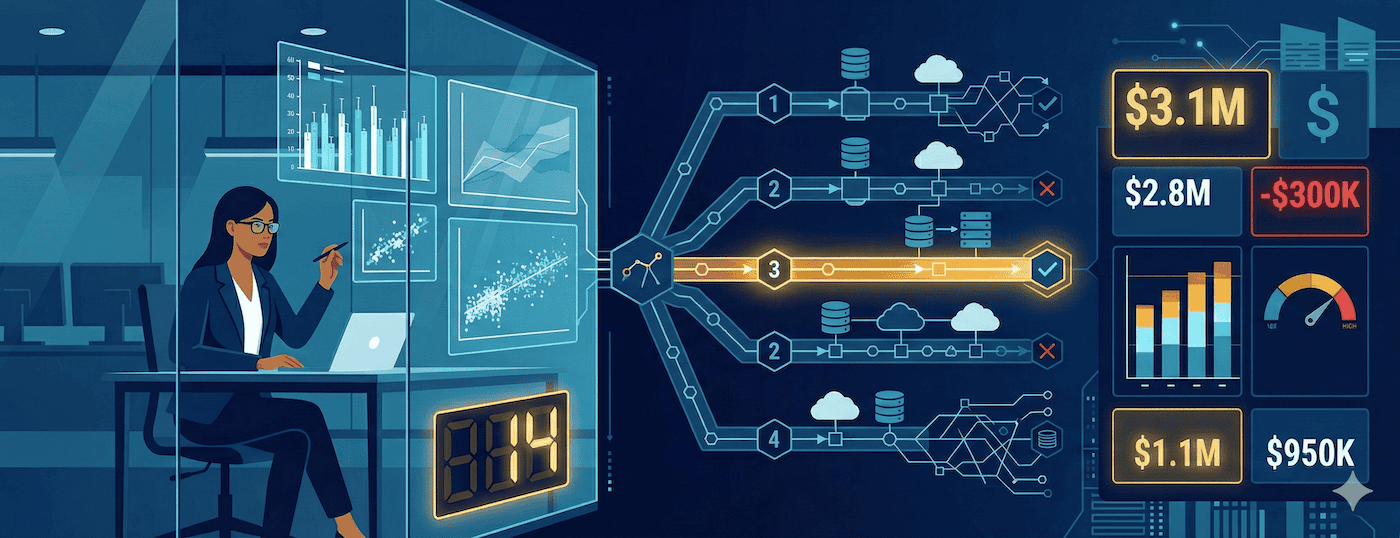

With benchmarks, the same question gets a better answer: "Platform A is 40% cheaper at equivalent performance for our average query size, based on independent testing. The lock-in cost of switching is justified by $X million savings over 3 years."
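The arithmetic behind that kind of answer is simple enough to write down. This is a minimal sketch, assuming a flat annual spend and a one-time migration cost; the function name and every dollar figure are hypothetical placeholders, not numbers from any benchmark.

```python
def three_year_savings(annual_cost_current: float,
                       relative_cost_new: float,
                       migration_cost: float,
                       years: int = 3) -> float:
    """Net savings over `years`, after a one-time migration cost.

    relative_cost_new is the new platform's cost as a fraction of the
    current one at equivalent performance (0.6 means 40% cheaper).
    """
    annual_cost_new = annual_cost_current * relative_cost_new
    return (annual_cost_current - annual_cost_new) * years - migration_cost


# Hypothetical inputs: $2M/year today, $1M one-time migration cost.
claimed = three_year_savings(2_000_000, 0.6, 1_000_000)      # benchmark says 40% cheaper
pessimistic = three_year_savings(2_000_000, 0.8, 1_000_000)  # real gap is only 20%

print(f"If the benchmark holds: ${claimed:,.0f}")
print(f"If the gap is half that: ${pessimistic:,.0f}")
```

Running both cases side by side shows how much of the business case rests on the benchmark's margin of error: in this example, halving the claimed advantage cuts the net savings from roughly $1.4M to roughly $0.2M.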

The data may be imperfect, but it's data. And when you're asked to justify a platform that costs millions annually, having external data points, even flawed ones, is the difference between a knowing nod and a very uncomfortable meeting. I know which one I prefer.

Cloud spend demands justification

Gartner forecast global public cloud spending of $723.4 billion for 2025 [8]. At that scale, "because we've always used it" doesn't survive a finance review. Every year, finance asks the data team: "Why do we spend $X on Platform Y?" They expect performance and cost data. In many organizations, failing to provide that evidence weakens the data team's budget credibility.

The commitment decisions keep getting harder. Cloud platforms offer 25-40% savings for 1-year commitments and as much as 55-72% for 3-year commitments [9], but multi-year commitments lock you in. If your data volume grows 50% year-over-year and independent data suggests your workload would run 30% faster on a competitor, that three-year commitment is a fraught decision. Without external data points, you're extrapolating blindly.
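To see why the decision is fraught, it helps to put the two options on the same three-year footing. The sketch below rests on several simplifying assumptions that are mine, not the vendors': the commitment is sized to year-one usage, usage above it is billed on demand, "30% faster" is read as 30% less billed compute at the same unit price, and migration cost is ignored. All inputs are hypothetical.

```python
def three_year_costs(base_annual: float, growth: float,
                     commit_discount: float,
                     competitor_speedup: float) -> tuple[float, float]:
    """Return (stay_committed, switch_on_demand) totals over three years."""
    stay_committed = 0.0
    switch_on_demand = 0.0
    for year in range(3):
        # On-demand-equivalent spend for this year's volume.
        usage = base_annual * (1 + growth) ** year
        # Growth beyond the committed baseline pays the undiscounted rate.
        overage = max(0.0, usage - base_annual)
        stay_committed += base_annual * (1 - commit_discount) + overage
        # Assumption: a faster platform bills proportionally less compute.
        switch_on_demand += usage * (1 - competitor_speedup)
    return stay_committed, switch_on_demand


# $1M/year baseline, 50% yearly growth, competitor "30% faster".
deep = three_year_costs(1_000_000, 0.5, commit_discount=0.55, competitor_speedup=0.30)
shallow = three_year_costs(1_000_000, 0.5, commit_discount=0.25, competitor_speedup=0.30)

print(f"55% discount: stay ${deep[0]:,.0f} vs switch ${deep[1]:,.0f}")
print(f"25% discount: stay ${shallow[0]:,.0f} vs switch ${shallow[1]:,.0f}")
```

Under these assumptions the deep discount makes staying cheaper, while the shallow one makes switching cheaper. The answer flips on inputs that only comparative data can supply.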

Fifty databases and a deadline

The vendor landscape is chaotic. Snowflake, BigQuery, Redshift, Databricks, ClickHouse, DuckDB, Firebolt, StarRocks, SingleStore, Yellowbrick; the list grows every year. Each claims superiority. Nobody can realistically evaluate all of them.

In practice, benchmarks are the most scalable comparative filtering mechanism I've found. "This platform consistently ranks in the top tier for analytical workloads." "This platform excels at real-time ingestion but struggles with complex joins." Pattern recognition across multiple benchmarks, even imperfect ones, helps narrow 50 options to 3-5 candidates worth deeper evaluation.

You can't run comprehensive tests on 50 platforms. You can read 50 benchmark reports in an afternoon. I do this regularly, and the patterns are surprisingly consistent across independent sources.

And increasingly, the people making these decisions aren't database specialists. A Gartner projection, as quoted in a Google Cloud summary of the 2024 Magic Quadrant, says 75% of DBMS purchase decisions will be made by business domain leaders by 2027, up from 55% in 2022 [10]. For a domain leader, "Platform A is 40% faster and 20% cheaper for workloads like yours" is actionable in a way that "columnar storage with vectorized execution" simply isn't. Benchmarks, despite their flaws, make cross-platform differences visible to the people who increasingly make the calls.

How to read benchmarks without being fooled

So yes, benchmarks have real problems. Vendor benchmarks serve marketing. Configuration choices skew results. Standard workloads may not match yours. All valid.

But "benchmarks are flawed" and "benchmarks are worthless" are different claims. The first doesn't imply the second. Restaurant reviews are written by people with opinions, biases, and incomplete information. Reviews are still useful. You just read them with appropriate skepticism.

When I evaluate a benchmark report, I use a four-step filter:

1. Discard benchmark reports that hide basic configuration, hardware, or workload details.

2. Discount vendor-funded claims unless independent reports show similar ranking patterns.

3. Compare at least three sources and look for directional consistency, not tiny deltas.

4. Validate finalists on my own workload before making a commitment.
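The first three steps are mechanical enough to sketch in code. This is an illustration under stated assumptions, not a definitive procedure: the `Report` fields, source names, and platform letters are hypothetical, and vendor-funded reports are simply excluded from the count, which is a stricter simplification of "discount". The fourth step, validating on your own workload, starts from whatever this returns.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Report:
    source: str
    vendor_funded: bool
    discloses_setup: bool  # configuration, hardware, and workload published?
    ranking: list[str]     # platforms, fastest first


def shortlist(reports: list[Report], top_n: int = 3,
              min_sources: int = 3) -> list[str]:
    """Platforms in the top `top_n` of at least `min_sources` usable reports."""
    # Step 1: discard reports that hide setup details.
    usable = [r for r in reports if r.discloses_setup]
    # Step 2: discount vendor-funded reports (here: leave them out of the count).
    independent = [r for r in usable if not r.vendor_funded]
    # Step 3: count top-tier appearances and ignore exact positions.
    votes = Counter(p for r in independent for p in r.ranking[:top_n])
    return sorted(p for p, n in votes.items() if n >= min_sources)


reports = [
    Report("indie-1", False, True, ["A", "B", "C", "D"]),
    Report("indie-2", False, True, ["B", "A", "D", "C"]),
    Report("indie-3", False, True, ["A", "C", "B", "E"]),
    Report("vendor-E", True, True, ["E", "A", "B", "C"]),
    Report("opaque", False, False, ["D", "E", "A", "B"]),
]

print(shortlist(reports))  # ['A', 'B']
```

Counting top-tier appearances rather than averaging positions is a deliberate choice here: it rewards directional consistency and ignores the tiny deltas that separate first place from second.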

Then I ask four questions:

- Who funded this? Vendor-funded means skeptical. Independent means more trust.

- What configuration? Default settings favor some platforms, penalize others. Optimized settings may not reflect your team's capability.

- What workload? OLAP benchmarks tell you nothing about OLTP. TPC-H tells you nothing about JSON processing.

- What hardware? Cloud instance generation matters. On-prem versus cloud matters.

If three independent sources say Platform A is faster for analytical workloads, that's meaningful signal. If only the vendor says it, I discount heavily. Patterns across benchmarks are more reliable than individual results.

My advice: use benchmarks for initial filtering, reducing 50 options to 3-5 candidates. Then run your own tests on the finalists. Smaller scope, but matched to your reality. And factor in non-performance variables: cost, operational complexity, ecosystem, lock-in risk. Speed isn't everything.
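A scoped test on the finalists does not need much machinery. The sketch below is a minimal timing harness, assuming a Python DB-API style connection; it uses an in-memory SQLite database purely as a stand-in, and the table, data, and query names are hypothetical. Against a real candidate you would swap in that platform's driver and a sample of your own queries.

```python
import sqlite3
import statistics
import time


def time_query(conn, sql: str, warmups: int = 1, repeats: int = 5) -> float:
    """Median wall-clock seconds for `sql`, after discarding warm-up runs."""
    for _ in range(warmups):
        conn.execute(sql).fetchall()
    samples = []
    for _ in range(repeats):
        start = time.perf_counter()
        conn.execute(sql).fetchall()  # fetch everything so the work is done
        samples.append(time.perf_counter() - start)
    return statistics.median(samples)


# Stand-in data set; replace with a sample of your own tables.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, region TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO orders (region, amount) VALUES (?, ?)",
    [(f"r{i % 10}", float(i)) for i in range(50_000)],
)

# Two representative workloads (hypothetical examples).
workloads = {
    "sum_by_region": "SELECT region, SUM(amount) FROM orders GROUP BY region",
    "top_orders": "SELECT id, amount FROM orders ORDER BY amount DESC LIMIT 100",
}

results = {name: time_query(conn, sql) for name, sql in workloads.items()}
for name, seconds in results.items():
    print(f"{name}: {seconds * 1000:.2f} ms (median of 5)")
```

Reporting the median of several runs after a warm-up keeps one cold-cache outlier from deciding the comparison. Record the configuration you ran with alongside the numbers, so your own results pass the same filter you apply to everyone else's.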

Conclusions

I've spent my career watching data practitioners navigate real constraints: a week or two to make platform decisions with multi-year financial implications, accountability for cloud spend in the millions, corporate mandates that remove choice but still require cost-performance analysis, and 50+ platform options that can't all be evaluated in depth.

Benchmarks are how practitioners navigate these constraints, imperfect as they are. The solution isn't to ignore them. It's to use them with appropriate skepticism, corroborate across sources, and supplement with targeted testing on your actual workload.

The critique of benchmarks should drive demand for better benchmarks. That's what I'm building with Oxbow Research: open methodology, published data, no vendor funding.

If you're evaluating platforms this quarter, here's a practical starting point:

1. Pick 3-5 candidates using independent benchmark patterns.

2. Run a scoped in-house test on your top 2 workloads.

3. Present a short cost-performance tradeoff memo before committing.

Footnotes

[1] Enterprise Data Warehouse: A Full Guide for 2025 - ScienceSoft. Decision timeline research: goals elicitation 3-20 days, tech stack selection 2-15 days, business case creation 2-15 days, implementation 6-12 months.

[2] Gartner, "Risks and Challenges in Data Migrations and Conversions" (G00165710, 2009). This widely cited 83% migration failure/overrun figure is nearly two decades old, but remains the most commonly referenced stat in the field. See also The Research Is Clear: Too Many Migration Projects Fail - Curiosity Software, which surveys multiple migration failure studies with similar findings.

[3] From Teradata to the Cloud: Building a Future-Ready Data Foundation While Saving $140M - Persistent Systems Client Success Story.

[4] Teradata Migration: A Step-by-Step Guide - Hakkoda. Case study: 700+ queries migrated, 8-month engagement, 60% cost reduction, 85% query efficiency improvement, 16x faster testing.

[5] Data Warehouse Migration Best Practices - Databricks Documentation. Parallel validation is a recommended practice across major cloud platform migration guides.

[6] Cloud Computing Study 2024 - Foundry (formerly IDG), August 2024. Survey of 821 global IT decision-makers: 63% accelerated cloud migration in 2024, up from 57% in 2023.

[7] BigQuery Pricing and Snowflake Pricing - Official vendor documentation. Pricing varies by compute model, commitment level, and workload patterns.

[8] 90+ Cloud Computing Statistics: A 2025 Market Snapshot - CloudZero, citing Gartner. Global cloud spending $723.4B in 2025.

[9] See AWS Savings Plans, Google Cloud Committed Use Discounts, and Azure Reservations. Discount ranges vary by provider, commitment length, and payment structure.

[10] 2024 Gartner Magic Quadrant for Cloud Database Management Systems - Google Cloud Blog, December 2024. Gartner projection (as cited): 75% of DBMS decisions by business domain leaders by 2027.