Why database benchmarks are broken

A 2018 study examined 16 published database benchmark papers. Not one reported enough information to reproduce the results.

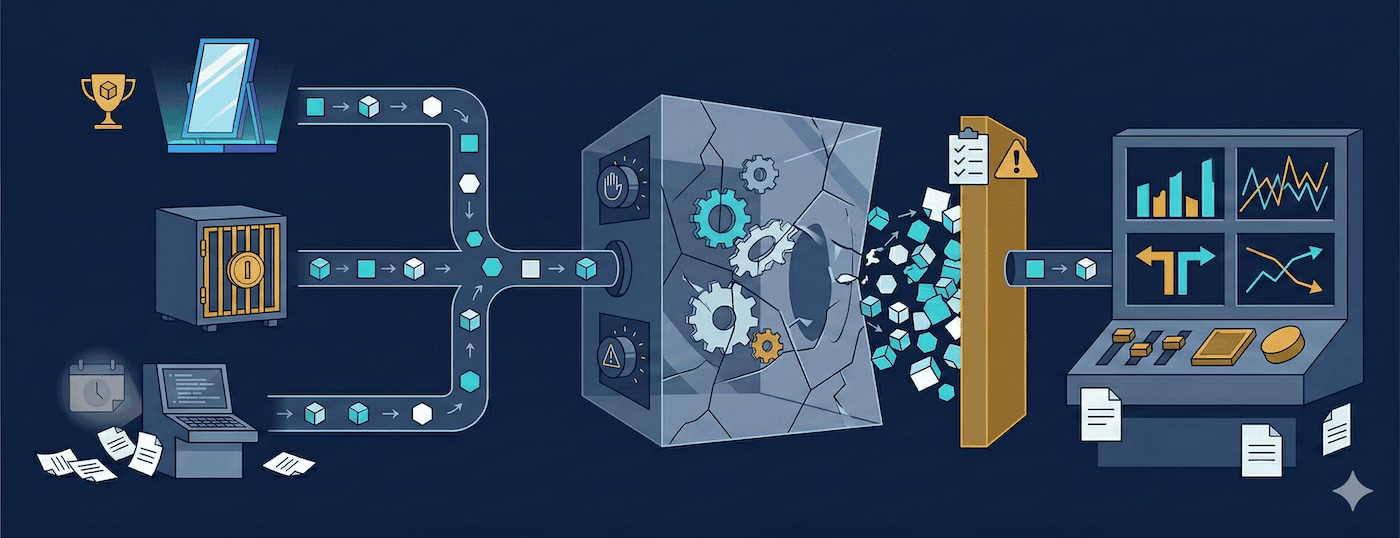

TL;DR: Can you trust database benchmarks? Mostly no. Vendors test their own homework, TPC audits cost $100K, and community benchmarks freeze the day they're published. If you're picking a platform this quarter, the data you need barely exists.

When the test-taker writes the test

Every data platform vendor claims to be the fastest. Browse their benchmark pages: I have yet to find one where the vendor's own product doesn't win. Vendor benchmarks aren't measurement; they're marketing with numbers.

This matters because platform decisions involve real money. In my experience, a mid-size company spends $500,000 or more annually on its data platform. Enterprises can easily reach $5 million. The cloud data warehouse market is estimated at $12 billion and growing at over 25% annually [1], and finance teams are asking harder questions about every line item. Making the wrong choice based on misleading benchmarks means wasted spend, painful migrations, and missed opportunities. Yet the data available to inform these decisions is terrible.

Vendor benchmarks have an obvious problem: the company selling the product is measuring the product. The incentives are misaligned from the start.

But the problems go deeper than simple bias. A 2018 peer-reviewed study examined 16 published database benchmark papers and found that none reported the parameters necessary to interpret their results [2]. One configuration parameter alone changed transaction throughput by a factor of 28. Vendor benchmarks systematically distort comparisons in four ways that make the numbers essentially meaningless.

Configuration optimization:

The most common distortion is asymmetric tuning: benchmarking your own product with expert configuration while running competitors on defaults. A skilled engineer can often double or triple performance on any database through careful configuration: index selection, memory allocation, parallelism settings, query hints. When a vendor shows "10x faster than the competition," the real comparison is often "our best versus their default."

These optimizations require expertise that typical users don't have. The vendor's benchmark team has deep knowledge of their own product. They know which configuration knobs matter. Competitors' products? They might spend an afternoon reading docs. The resulting comparison tells you more about configuration skill than product capability.

Workload selection:

Every database architecture has strengths and weaknesses. Columnar databases crush analytical aggregations but struggle with point lookups. Row stores handle transactions beautifully but choke on full-table scans. In-memory systems win on small datasets but hit limits at scale.

Vendors choose benchmarks that highlight their strengths. A columnar vendor runs TPC-H (analytical queries). A transactional vendor runs TPC-C (OLTP). Each shows impressive numbers on their chosen workload. Neither mentions the workloads where they lose.

The phrase "TPC-H isn't representative of real workloads" appears suspiciously often in marketing from vendors who perform poorly on TPC-H. Workload representativeness only becomes a concern when the benchmark is unflattering.

Hardware asymmetry:

Cloud benchmarks add another variable: instance selection. Vendors run their product on premium instances, the latest generation, maximum memory, fastest storage. Competitors run on whatever was convenient. The resulting performance gap reflects hardware choices as much as software efficiency.

Instance selection is particularly insidious because the differences aren't obvious. An r6i.8xlarge and r5.8xlarge sound similar. Both have 32 vCPUs and 256GB RAM. But the r6i has newer processors, faster memory bandwidth, and better storage performance. Small differences in instance generation compound across queries.
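A quick way to spot the tuning and hardware asymmetries described above is to write down how each system was run and compare the two setups before comparing any timings. The following is only a minimal sketch: `RunSetup`, `find_asymmetries`, the system names, and the settings are hypothetical names chosen for illustration, not part of any benchmark tool.

```python
from dataclasses import dataclass, field


@dataclass
class RunSetup:
    """How one system was run in a comparison (all field names are illustrative)."""
    system: str
    instance_type: str
    # Settings changed from the product defaults; an empty dict means "ran on defaults".
    tuned_settings: dict = field(default_factory=dict)


def find_asymmetries(a: RunSetup, b: RunSetup) -> list[str]:
    """Return human-readable warnings about uneven test conditions."""
    warnings = []
    if a.instance_type != b.instance_type:
        warnings.append(
            f"hardware differs: {a.system} on {a.instance_type}, "
            f"{b.system} on {b.instance_type}"
        )
    # Flag the "our best versus their default" pattern: one side tuned, the other not.
    if bool(a.tuned_settings) != bool(b.tuned_settings):
        tuned, default = (a, b) if a.tuned_settings else (b, a)
        warnings.append(
            f"tuning differs: {tuned.system} has {len(tuned.tuned_settings)} "
            f"overrides, {default.system} runs on defaults"
        )
    return warnings


if __name__ == "__main__":
    # Placeholder setups, not taken from any real benchmark report.
    ours = RunSetup("vendor_db", "r6i.8xlarge", {"memory_limit": "200GB", "threads": 32})
    theirs = RunSetup("competitor_db", "r5.8xlarge")
    for warning in find_asymmetries(ours, theirs):
        print("WARNING:", warning)
```

A check like this does not make a comparison fair. It only makes the unevenness visible, which is more than most vendor benchmark pages offer.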

Methodology opacity:

The most damaging practice is simply hiding methodology. "Internal testing" with no details. "Optimized configuration" with no specifics. "Contact us for more information" instead of published scripts.

When a vendor can't or won't share exactly how they produced their numbers, the numbers are worthless. Without published methodology, you can't reproduce the results, validate the claims, or even understand what scenarios the benchmark represents. Independent verification becomes impossible.
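Publishing methodology does not have to be elaborate. As a rough sketch of the minimum a reader would need, the record below captures version, hardware, settings, and query set alongside a short fingerprint, so two results can be checked for identical conditions. The `RunManifest` class and its field names are my own assumptions for illustration, not a standard format, and the example values are placeholders.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field


@dataclass
class RunManifest:
    """Everything a reader needs to rerun a benchmark (hypothetical schema)."""
    system: str
    version: str
    instance_type: str
    benchmark: str
    scale_factor: int
    settings: dict = field(default_factory=dict)     # non-default configuration
    query_files: list = field(default_factory=list)  # exact queries that were run

    def to_json(self) -> str:
        """Serialize for publication next to the results."""
        return json.dumps(asdict(self), sort_keys=True, indent=2)

    def fingerprint(self) -> str:
        """Short hash of the canonical manifest; equal hashes mean equal conditions."""
        canonical = json.dumps(asdict(self), sort_keys=True, separators=(",", ":"))
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


if __name__ == "__main__":
    # Placeholder values for illustration only.
    manifest = RunManifest(
        system="example_db",
        version="1.2.3",
        instance_type="r6i.8xlarge",
        benchmark="TPC-H",
        scale_factor=100,
        settings={"threads": 32},
        query_files=["q01.sql", "q02.sql"],
    )
    print(manifest.to_json())
    print("fingerprint:", manifest.fingerprint())
```

The fingerprint changes whenever any recorded parameter changes, so a result quoted without its manifest, or with a different fingerprint, is a different experiment.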

These aren't theoretical concerns. In November 2021, Databricks published an official, audited TPC-DS result at 100TB [3]. Snowflake responded with its own unofficial comparison claiming similar price-performance. Databricks challenged Snowflake's methodology. The dispute played out across competing blog posts and Hacker News threads, illustrating exactly the problem: even when one vendor publishes audited results, a competitor can muddy the water with unofficial claims that can't be independently verified.

$100,000 to publish a number

TPC (Transaction Processing Performance Council) benchmarks represent the gold standard for rigor. TPC-H and TPC-DS define precise specifications, exact queries, data generation procedures, and validation requirements. Published results require independent audits. Full disclosure is mandatory. The methodology is exactly right.

But almost nobody uses it. Certification costs $100,000 or more [4], which prices out open-source projects and smaller vendors entirely. And even vendors who can afford it face a perverse incentive: publishing only makes sense if you're confident competitors won't beat your result quickly. Spend six figures on a number that gets topped next quarter, and you've just proved you're second-fastest. Most vendors never publish, or publish once, claim the record, and never update. You can compare Oracle to Microsoft using official TPC numbers, but you can't compare either to DuckDB or ClickHouse.

The queries themselves remain genuinely useful. TPC-H's 22 queries test joins, aggregations, and sorting, the foundational operations that still dominate analytical workloads. TPC-DS adds 99 queries that stress query optimizers significantly harder, covering subqueries, window functions, and complex join patterns. These are the operations that separate fast platforms from slow ones. No single benchmark covers everything (modern workloads also include JSON processing, ML feature engineering, and time-series analysis), but TPC-H and TPC-DS cover the analytical core well.

Then there's the lag. TPC results represent a point in time. The audit process takes months. By the time results are published, the tested product version may be outdated. For cloud platforms that update continuously, published results may not reflect current performance.

The TPC does important work, and its methodological rigor is exactly right. The problem isn't the benchmarks themselves; it's that the cost and time requirements make them impractical for most comparisons.

Enthusiasm without governance

Individual practitioners and community members run their own benchmarks to fill the gap. Mark Litwintschik's tech.marksblogg.com publishes detailed database comparisons. ClickBench, maintained by ClickHouse, provides a single-table benchmark [5]. H2O's db-benchmark tests dataframe operations [6]. Countless blog posts share individual experiences.

This work is valuable. Community benchmarks provide data points that would otherwise not exist. But they have structural problems that limit their usefulness.

Methodology varies wildly. One person runs DuckDB on a laptop. Another runs Snowflake on a Large warehouse. Someone else tests PostgreSQL with default settings while tuning ClickHouse aggressively. Two people testing the same platforms can produce opposite rankings, and neither is wrong; they just measured different things.

Results go stale immediately. Someone runs tests, publishes results, and moves on. The platforms improve. New versions ship. But five-year-old benchmark posts still appear in Google searches, citing platform versions that no longer exist. There's no systematic process for keeping results current.

Expertise is unevenly distributed. Properly benchmarking a data platform requires deep expertise: configuration options, query optimization, hardware characteristics, statistical analysis. Few individuals have this across multiple platforms. A PostgreSQL expert benchmarking ClickHouse might miss critical configuration options. The results then reflect tester expertise rather than product capability.

Nobody owns the errors. When community benchmarks contain errors, there's no correction mechanism. Vendors can point out problems, but doing so looks defensive. Bad data persists because no one is responsible for accuracy.

The people doing this work are filling a real gap with limited resources. Good intentions can't compensate for missing infrastructure: independent benchmarking without governance produces unreliable results.

Million-dollar decisions, dollar-store data

The consequences compound. Data teams facing platform selection have too much information and too little clarity. Vendor benchmarks all claim victory. Community benchmarks contradict each other. Audited TPC results exclude half the options. The rational response is often to ignore external benchmarks entirely and run your own tests, but proper benchmarking takes weeks or months of engineering time. Most teams can't afford this, so they make decisions based on reputation, sales relationships, or which vendor bought them the nicest dinner.
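For teams that do run their own tests, the timing loop itself is the easy part. Below is a minimal sketch that assumes the query can be wrapped in a zero-argument Python callable. `time_query` is a hypothetical helper, and the stand-in workload is plain Python, not a database call. It discards warm-up runs and reports the median and spread instead of a single number.

```python
import statistics
import time
from typing import Callable


def time_query(run_query: Callable[[], object], warmups: int = 2, repeats: int = 5) -> dict:
    """Time a callable several times and summarize the samples.

    Warm-up runs are executed but not measured, so cold caches and
    one-time compilation don't dominate the reported numbers.
    """
    for _ in range(warmups):
        run_query()

    samples = []
    for _ in range(repeats):
        start = time.perf_counter()
        run_query()
        samples.append(time.perf_counter() - start)

    return {
        "median_s": statistics.median(samples),
        "min_s": min(samples),
        "max_s": max(samples),
        # Sample standard deviation needs at least two measurements.
        "stdev_s": statistics.stdev(samples) if len(samples) > 1 else 0.0,
        "samples": samples,
    }


if __name__ == "__main__":
    # Stand-in workload; replace with a function that submits a real query.
    result = time_query(lambda: sum(range(1_000_000)))
    print(f"median {result['median_s']:.4f}s "
          f"(min {result['min_s']:.4f}s, max {result['max_s']:.4f}s)")
```

The weeks of effort go into everything this sketch leaves out: loading representative data, tuning each platform competently, and deciding which queries actually resemble production.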

When a team picks a platform based on benchmark claims that don't reflect their actual workload, they discover the gap after committing to integration, training, and dependencies. Enterprise migration projects routinely run into the millions [7].

The prevalence of misleading benchmarks breeds cynicism. "All benchmarks are marketing" becomes received wisdom, and teams stop paying attention to performance data entirely.

This cynicism is understandable but harmful. Real performance differences exist between platforms. Some platforms genuinely are 10x faster for certain workloads. Dismissing all benchmarks means missing genuine optimization opportunities. New data platform vendors struggle to compete on credibility at all: established players have years of TPC results, marketing campaigns, and analyst relationships, while a startup with a genuinely better product cannot simply prove superiority because the benchmarking landscape is too broken to support credible claims. The market rewards marketing spend rather than engineering excellence.

Something better

The information available for platform decisions, one of the most consequential technical choices an organization makes, is unreliable. In a previous post, I argued that flawed benchmarks still beat no benchmarks. I stand by that. But "better than nothing" is a low bar, and practitioners deserve actual methodology, not just less-bad marketing. Fixing this requires independence from vendor funding, open methodology anyone can reproduce, and ongoing maintenance instead of one-time publication. I think that's buildable. In fact, I'm building it.

References

[1] Cloud Data Warehouse Market Size & Share Analysis - Mordor Intelligence. Estimated at $11.78B in 2025, growing at 27.64% CAGR to $39.91B by 2030.

[2] Raasveldt et al., Fair Benchmarking Considered Difficult: Common Pitfalls In Database Performance Testing - DBTEST 2018. Examined 16 benchmark papers; none reported sufficient parameters for reproducibility.

[3] Databricks Sets Official Data Warehousing Performance Record - Databricks, November 2021. Official TPC-DS 100TB result: 32.9M QphDS. Full disclosure report. See also Databricks' response to Snowflake's counter-claims and Snowflake's rebuttal.

[4] TPC Policies - Transaction Processing Performance Council. Full benchmark certification requires third-party auditing and TPC membership.

[5] ClickBench - ClickHouse single-table benchmark.

[6] db-benchmark - H2O.ai database-like operations benchmark.

[7] From Teradata to the Cloud: Building a Future-Ready Data Foundation While Saving $140M - Persistent Systems. One healthcare company's Teradata-to-cloud migration involved 5,000+ users across 25 lines of business.